314. Neural Network Optimization

Build your own deep neural network image compressor and tune it to peak performance

You can enroll below for free or, if it's easier, unlock the entire End-to-End Machine Learning Course Catalog for 20 USD.

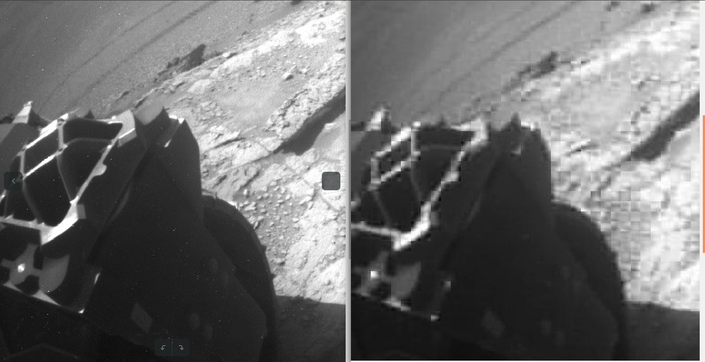

In this course we'll focus on tuning our neural network for the best performance we can get in a practical application: compressing imagery from the Mars Rover for efficient transmission back to Earth. We'll demonstrate the importance of having a well-defined goal and performance measurement. We'll also show how to use profiling to find slow spots in our code, and how to widen those bottlenecks without resorting to GPUs. (All the code is sized to run on your local machine.)

At the core of this course is hyperparameter optimization, a non-convex optimization problem in a hybrid continuous-discrete parameter space (read as "hard"). Since we can't rely on gradient descent for this, we'll explore other options and show their benefits and weaknesses on our Martian imagery compression problem. By the time we're done, you'll have a solid grasp of a rich hyperparameter optimization toolkit including grid search, random search, and Powell's method.
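To give a flavor of two of these tools, here is a minimal sketch of grid search and random search over a hybrid space with one continuous hyperparameter (learning rate) and one discrete one (number of layers). It is not the course's code: `toy_error` is a hypothetical stand-in for a real validation error, and the ranges and function names are assumptions chosen only for illustration. Powell's method is not shown.

```python
import itertools
import math
import random


def toy_error(learning_rate, n_layers):
    """Hypothetical stand-in for a validation error (assumption, not real data).

    By construction it is lowest at learning_rate=1e-2 and n_layers=3.
    """
    return (math.log10(learning_rate) + 2) ** 2 + 0.5 * (n_layers - 3) ** 2


def grid_search(learning_rates, layer_counts):
    """Evaluate every combination and return (best_error, (lr, n_layers))."""
    candidates = itertools.product(learning_rates, layer_counts)
    return min((toy_error(lr, n), (lr, n)) for lr, n in candidates)


def random_search(n_trials, seed=0):
    """Sample lr log-uniformly in [1e-4, 1] and n_layers uniformly from 1..6."""
    rng = random.Random(seed)  # seeded so repeated runs give the same trials
    trials = []
    for _ in range(n_trials):
        lr = 10 ** rng.uniform(-4, 0)
        n = rng.randint(1, 6)
        trials.append((toy_error(lr, n), (lr, n)))
    return min(trials)


grid_best = grid_search([1e-4, 1e-3, 1e-2, 1e-1, 1.0], [1, 2, 3, 4, 5, 6])
random_best = random_search(n_trials=30)
print("grid:  ", grid_best)
print("random:", random_best)
```

Both runs here cost 30 evaluations. The grid only ever tries five distinct learning rates, while random search tries a different one on every trial, which is one reason it can do well when only a few hyperparameters really matter.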

I recently completed the course 314 NN Optimization from @_brohrer_ and formed my opinion.

I want to say that this is one of the two best resources about the optimization of Neural Networks that I have seen.

Chapter 4 and 6 I liked the most.

I recommend this course.

#DeepLearning

— Deran

Your Instructor

I love solving puzzles and building things. Machine learning lets me do both. I got started by studying robotics and human rehabilitation at MIT (MS '99, PhD '02), moved on to machine vision and machine learning at Sandia National Laboratories, then to predictive modeling of agriculture at DuPont Pioneer, and cloud data science at Microsoft. At Facebook I worked to get internet and electrical power to those in the world who don't have it, using deep learning and satellite imagery, and to do a better job of reliably identifying topics in unstructured text. Now at iRobot I work to help robots get better and better at doing their jobs. In my spare time I like to rock climb, write robot learning algorithms, and go on walks with my wife and our dog, Reign of Terror.